Machine learning systems are only as reliable as the tests that validate them. Now that we’ve discussed data bugs, let’s shift focus to testing the behavior that emerges from that data.

In this post, we explore one of the most essential techniques in the ML testing toolkit: regression testing. It’s a practical way to improve reliability and maintain consistent performance as your models evolve.

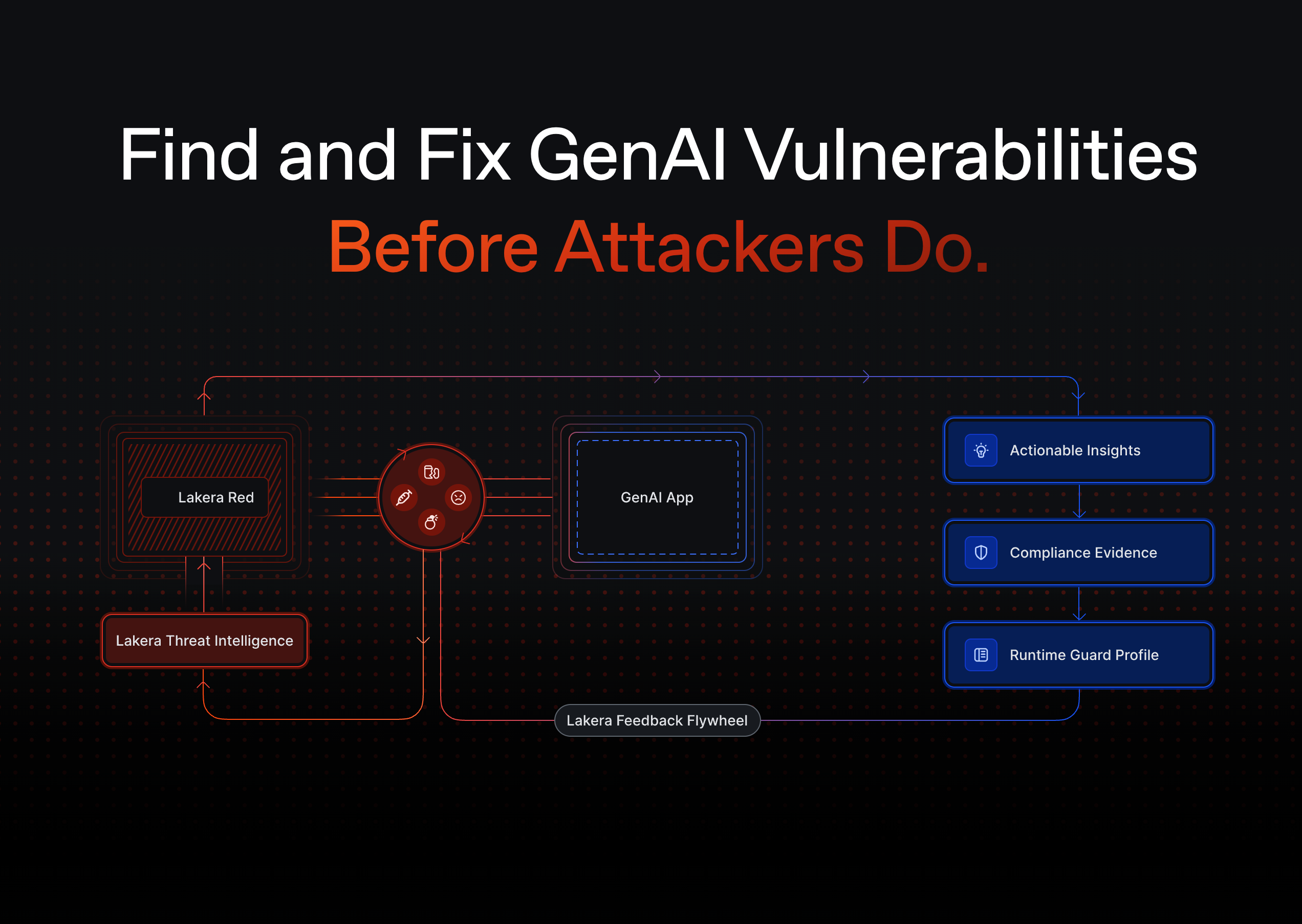

Regression testing isn’t enough for GenAI. Add dynamic testing and red teaming with Lakera Red.

The Lakera team has accelerated Dropbox’s GenAI journey.

“Dropbox uses Lakera Guard as a security solution to help safeguard our LLM-powered applications, secure and protect user data, and uphold the reliability and trustworthiness of our intelligent features.”

-db1-

If you’re building or iterating on LLM-based systems, these reads cover the types of failures that regression testing can help you catch—before they hit production:

- Prompt-based regressions are among the hardest to track—this guide to prompt injection explains why.

- See how subtle phrasing changes can bypass safety layers in this direct prompt injection overview.

- Jailbreak prompts often resurface after model updates—this LLM jailbreaking guide walks through real examples.

- Understand how poisoned data can silently break downstream model behavior in our training data poisoning guide.

- Learn how to spot degraded performance in real time with this LLM monitoring strategy.

- For output-level safety testing, this post on content moderation helps ensure your model doesn’t regress in harmful ways.

- And to catch vulnerabilities before users do, explore this approach to AI red teaming.

-db1-

What Is Regression Testing?

In traditional software development, regression testing refers to:

“…re-running functional and non-functional tests to ensure that previously developed and tested software still performs after a change.” [1]

Let’s say you find a bug, fix it, and want to make sure it doesn’t return in future versions. The solution? Add a test for it. That way, if the bug ever reappears, the test will catch it immediately. That’s the essence of regression testing.

Why Regression Testing Matters in Machine Learning

In machine learning, bugs can reappear after something as routine as retraining your model. This is especially likely when your datasets are constantly evolving.

ML regression testing helps you catch these issues. It ensures that your model keeps meeting baseline performance requirements, even as data and parameters change.

A Simple Way to Get Started

Each time your ML system fails on a tricky input, add that example to a “difficult cases” dataset. Use it as a regression test set that becomes part of your testing pipeline. Over time, you’ll build a valuable resource to track whether performance on known weak spots is improving—or breaking again.

Real-World Example: Olympic Integrity

Imagine you’ve built a computer vision system to detect whether runners stay in their lane during races.

The system performs well in cloudy conditions. But on sunny days, it misinterprets a runner’s shadow as the runner stepping out of bounds, triggering a false disqualification alert. That’s an ML bug.

Here’s how regression testing can help:

- Collect similar images where shadows confuse the model.

- Add them to a dedicated regression dataset.

- Retrain the model with improved data.

- Regularly test your model on both the standard test set and the regression set.

By doing this, you can catch regressions early and prevent the same bug from recurring in future updates.

Proactive ML Testing with Regression Sets

Regression testing doesn’t have to be reactive. It’s also a great proactive strategy for monitoring ML performance over time—especially in real-world deployments.

Say you’re deploying your model across multiple customer sites. How do you make sure it works equally well everywhere?

By building targeted regression datasets for key scenarios (e.g., different lighting conditions, locations, or user groups), you can track how performance holds up under each context.

Let’s look at how Tesla approaches this.

Regression Testing in Practice: The Tesla Approach

In 2020, Andrej Karpathy, Tesla’s Director of AI, shared how the company uses large-scale regression testing to validate its autopilot system [2].

Tesla has developed an advanced testing infrastructure that can:

- Automatically create test sets for specific scenarios.

- Mine edge cases from fleet-collected data.

- Continuously evaluate system behavior at scale.

They don’t just react to bugs—they design regression sets to proactively stress-test the system.

You Don’t Need to Be Tesla

You can apply similar principles on a much smaller scale.

For example, in the Olympic runner use case, you could create mini regression datasets for:

- Male vs. female athletes.

- Red vs. blue running tracks.

- Bright vs. cloudy lighting conditions.

Tracking model performance across these subsets will give you ongoing insights—and confidence—into how well your system generalizes.

Final Thoughts

Regression testing in machine learning is a low-effort, high-impact strategy to build more trustworthy models. It helps ensure that progress doesn’t come at the cost of stability.

Start small, stay consistent, and watch your model’s reliability improve over time.

.svg)