Runtime Security for AI Applications and Agents

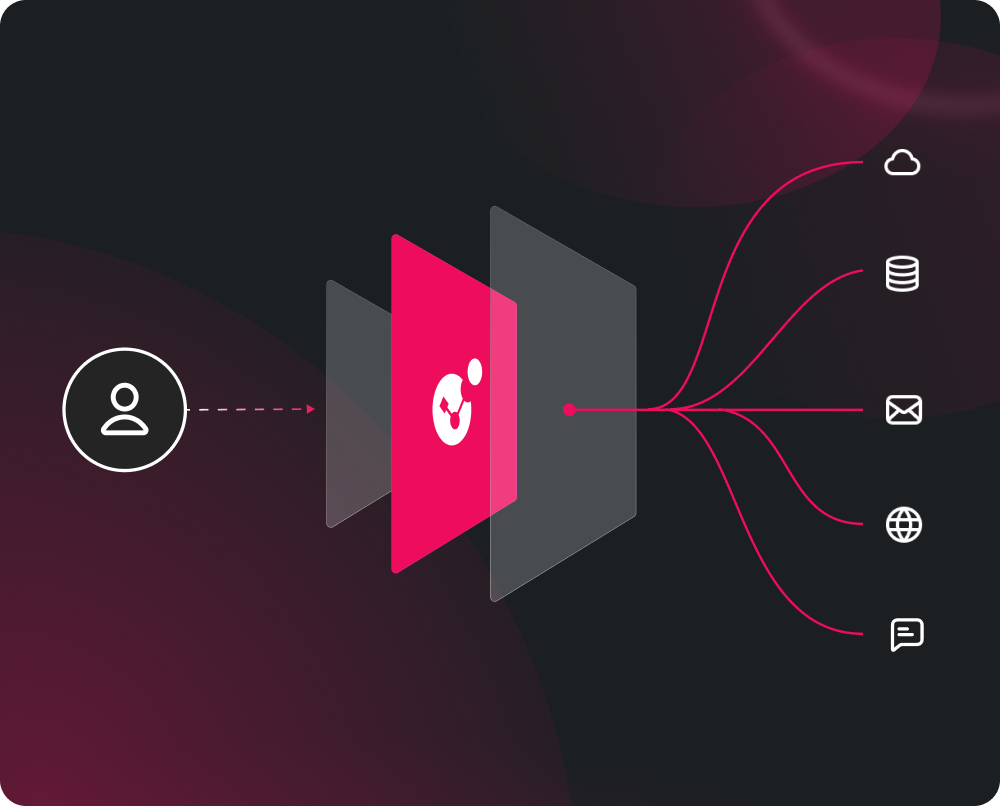

Secure every AI interaction your team builds and deploys, from prompts to outputs to agent actions. Enforcement happens inline, with no need to retrain models or rewrite prompts.

AI systems behave differently and introduce new security gaps

Discover and Govern Your AI Agents

Before you can secure AI agents, you need to understand where they exist and what they can access.

AI Agent Security provides visibility into agent usage and MCP-connected systems across your environment, including agents your teams did not explicitly build or register.

Security built for how AI actually works

AI systems behave probabilistically, act autonomously, and communicate in natural language. Securing them requires controls designed specifically for those properties.

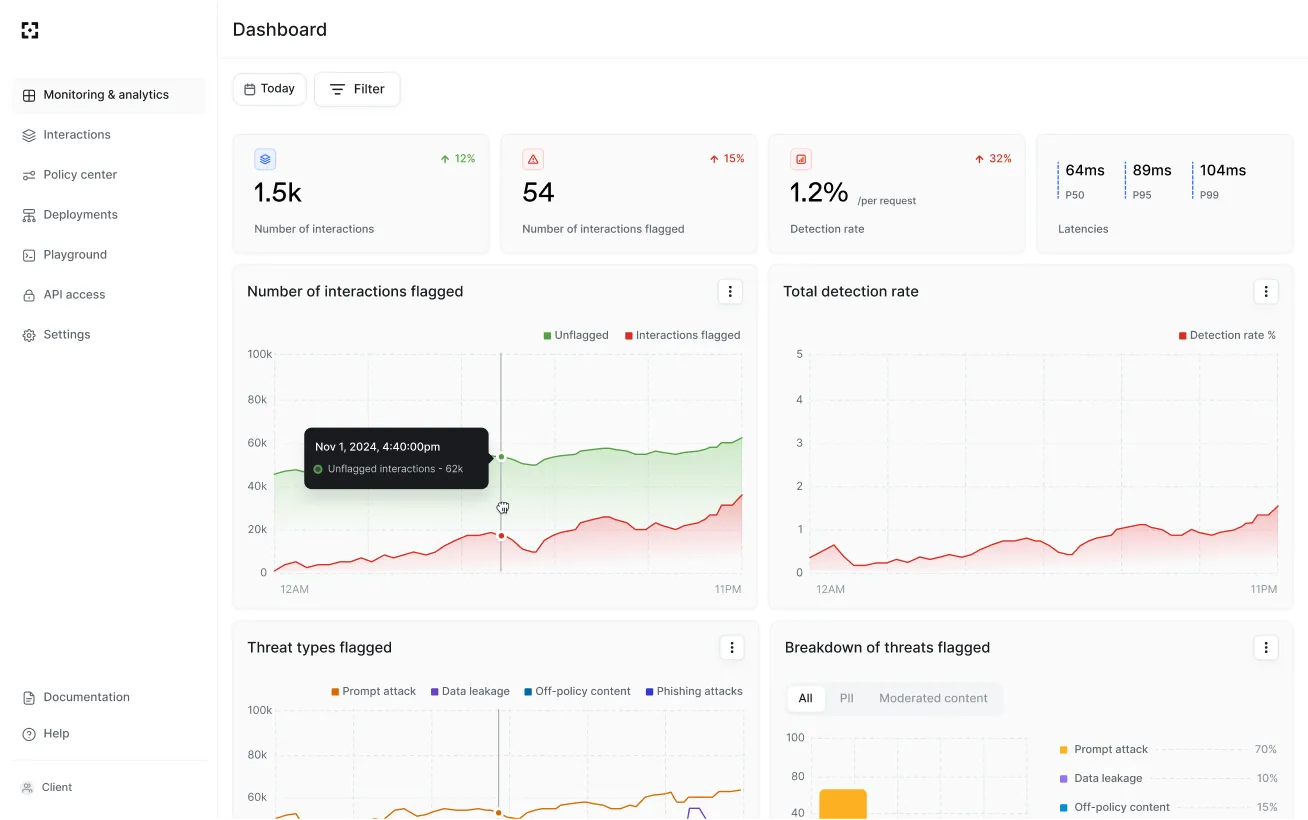

How AI Agent Security works

What AI Agent Security protects against

Adversarial attacks

Prompt injection, jailbreaks, and adversarial instructions blocked before they reach the model.

Data and access risks

Sensitive data exposure in prompts and responses, unauthorized agent access, and gaps in AI interaction visibility.

Safety and policy violations

Harmful or non-compliant outputs, unsafe or unauthorized agent actions, and misuse beyond defined policies.
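To make the idea of inline enforcement concrete, here is a minimal sketch of a guard that sits between the caller and the model. It is an illustration only and does not reflect how AI Agent Security is implemented: the names `guarded_call`, `PolicyViolation`, `INJECTION_PATTERNS`, and `EMAIL_PATTERN` are hypothetical, and the regular expressions are toy stand-ins for real detection logic.

```python
import re
from typing import Callable

# Hypothetical toy patterns, for illustration only.
# Real prompt-injection detection is far more involved than keyword matching.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your )?system prompt", re.IGNORECASE),
]

# Simplified email matcher used as a stand-in for sensitive-data detection.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


class PolicyViolation(Exception):
    """Raised when a prompt is blocked before it reaches the model."""


def guarded_call(model: Callable[[str], str], prompt: str) -> str:
    """Check the prompt, call the model, then redact the response."""
    # Input check: stop suspicious prompts before the model sees them.
    for pattern in INJECTION_PATTERNS:
        if pattern.search(prompt):
            raise PolicyViolation("prompt blocked: possible injection")

    response = model(prompt)

    # Output check: redact email addresses before returning the response.
    return EMAIL_PATTERN.sub("[REDACTED]", response)
```

Because the guard wraps the call rather than changing the model or the prompt template, the same check can be placed in front of any callable that takes a prompt and returns text.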

Where AI Agent Security fits

MCP-connected systems

Block indirect injection through connected tools before agents act on compromised instructions.

Applications

Identify safety and security failure modes before AI features and copilots reach production.

Agents

Contain unsafe actions, tool abuse, and connected-system risk at runtime.
