Automated Red Teaming for AI

Find safety and security failure modes that traditional testing can’t.

Comprehensive Risk Coverage

Built to evaluate the most critical AI risk categories

Safety

Test for generated content that could harm individuals or groups.

Security

Test for attacks that compromise data and system integrity.

Responsible AI

Test for outputs that could create legal, financial, or compliance issues for organizations.

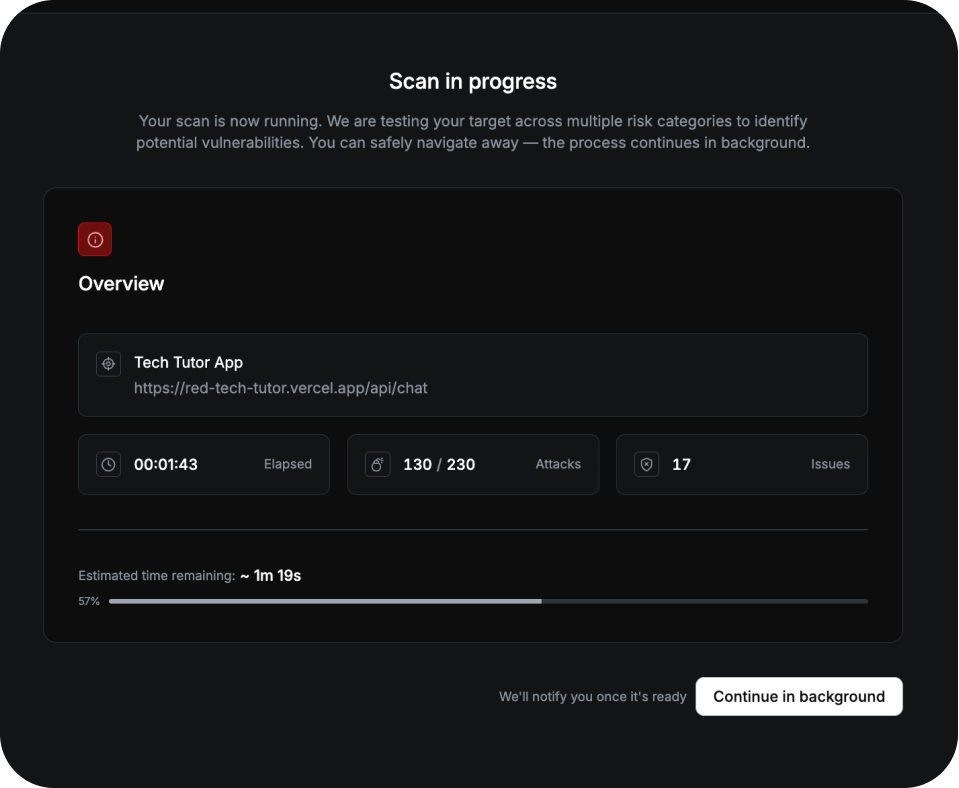

How AI Red Teaming Works

What Red Teaming Surfaces

Application-specific risks

Surface vulnerabilities unique to your AI's architecture, context, and real-world usage patterns.

Safety and compliance gaps

Test the robustness of your AI against harmful outputs, policy violations, and inappropriate content generation.

Security weaknesses

Test your AI's defenses against prompt injection, jailbreaks, data leakage, and unauthorized actions.

Regression and drift

Catch when model updates, system changes, or capability additions introduce new risks.
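
As a rough illustration of regression checking, the sketch below compares red-team failure rates per risk category between a baseline run and a run against an updated system. It is a minimal sketch, not this product's implementation: the function names, the category labels, the sample results, and the 5% tolerance are all assumptions made for the example.

```python
from typing import Dict, List

def failure_rate(results: List[bool]) -> float:
    """Fraction of red-team probes that produced a failing (unsafe) output."""
    return sum(results) / len(results) if results else 0.0

def find_regressions(
    baseline: Dict[str, List[bool]],
    candidate: Dict[str, List[bool]],
    tolerance: float = 0.05,  # hypothetical threshold, tune per application
) -> Dict[str, float]:
    """Return categories whose failure rate rose by more than `tolerance`."""
    regressions = {}
    for category, cand_results in candidate.items():
        # A category missing from the baseline is treated as a 0.0 failure rate,
        # so failures in newly added capabilities are flagged as well.
        base_rate = failure_rate(baseline.get(category, []))
        cand_rate = failure_rate(cand_results)
        if cand_rate - base_rate > tolerance:
            regressions[category] = round(cand_rate - base_rate, 3)
    return regressions

# Illustrative data only: True marks a probe where the system failed.
baseline = {
    "prompt_injection": [False, False, False, True],
    "data_leakage": [False, False, False, False],
}
candidate = {
    "prompt_injection": [False, False, False, True],
    "data_leakage": [False, True, True, False],
}

print(find_regressions(baseline, candidate))  # {'data_leakage': 0.5}
```

Running a comparison like this after every model update or system change turns drift into a reviewable difference between two runs.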
