We can now add another testing technique to our Swiss Army knife for ML testing: Fuzz testing. Let’s start by defining what fuzz testing is and by providing a quick overview of the common approaches.

Then, we’ll look at how this method can be used to efficiently stress test your ML system and to help uncover robustness issues during development.

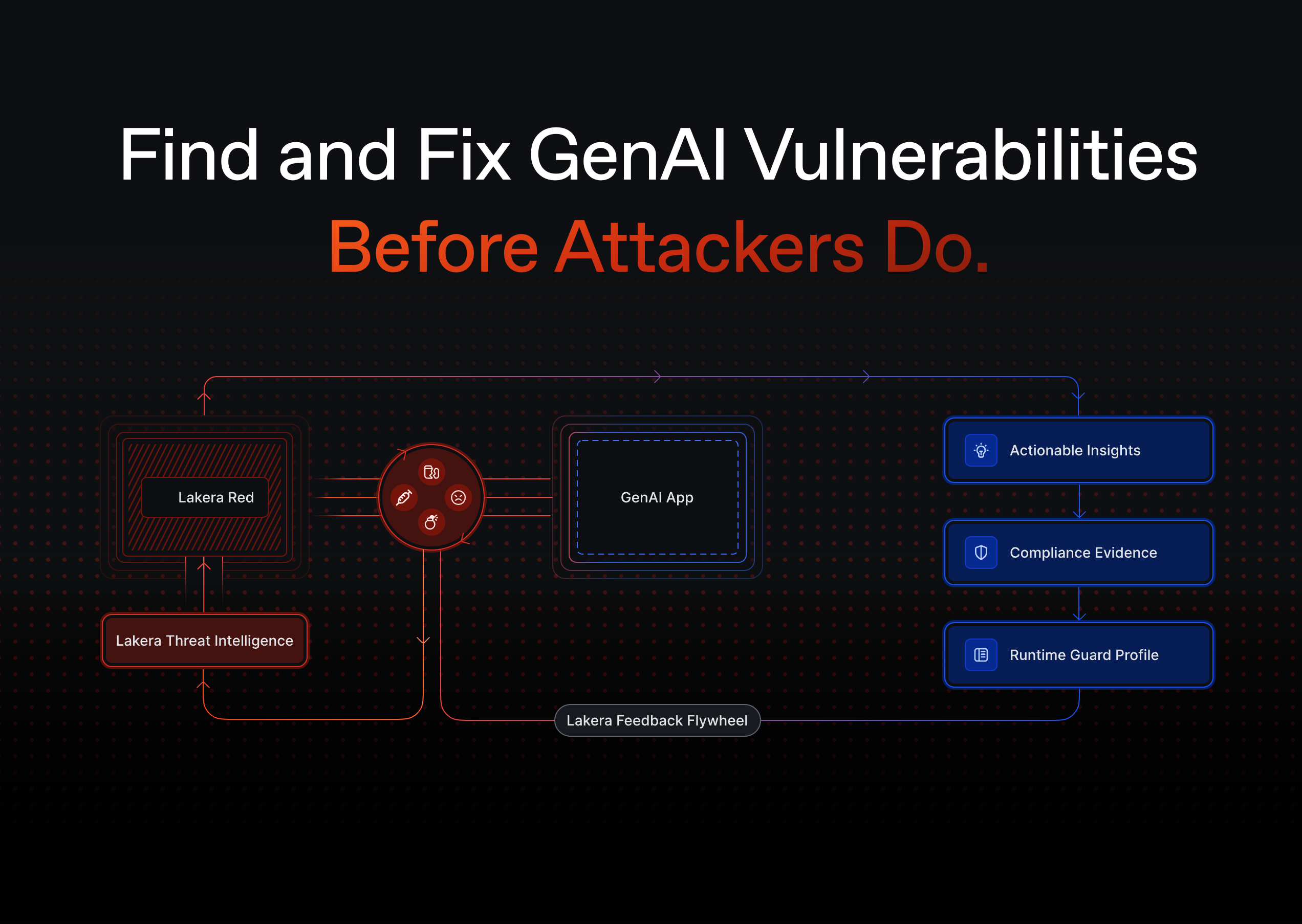

Fuzzing helps—but attackers use natural language, not random noise. Explore how Lakera simulates real-world threats.

The Lakera team has accelerated Dropbox’s GenAI journey.

“Dropbox uses Lakera Guard as a security solution to help safeguard our LLM-powered applications, secure and protect user data, and uphold the reliability and trustworthiness of our intelligent features.”

-db1-

If you’re exploring fuzz testing for GenAI systems, these reads provide essential context on attack types, risk detection, and how fuzzing fits into a broader security strategy:

- Understand what kinds of vulnerabilities fuzz testing uncovers in this guide to prompt injection.

- See how structured fuzzing can expose weaknesses in direct prompt injection handling.

- Learn how fuzzing reveals jailbreak edge cases in this post on LLM jailbreaking.

- Poor data hygiene creates exploitable logic paths—this training data poisoning guide explains the upstream risks.

- Monitor the impact of fuzzing inputs in real-time with this practical guide to LLM monitoring.

- For output-based safety evaluations, pair fuzz testing with content moderation to detect harmful completions.

- And for full-spectrum vulnerability assessment, AI red teaming complements fuzz testing with human-guided tactics.

-db1-

What is fuzz testing?

Fuzzing is a well-known technique extensively used in traditional software systems. Wikipedia defines it as follows:

“Fuzzing or fuzz testing is an automated software testing technique that involves providing invalid, unexpected, or random data as inputs to a computer program.”

Software bugs often appear when problematic inputs are presented to the system. If the logic behind the computer program was not written with these problematic inputs in mind, the software component can crash or behave in undesired ways. Fuzz testing looks for problematic inputs by following an automatic input generation strategy. Thus, problematic input data can be caught early, and the overall system becomes more reliable.

This idea extends naturally to computer vision and other ML systems. In particular for computer vision, the input space is extremely large. Problematic input data are likely to exist, but the relevant ones can be hard to find.

“For computer vision, the input space is extremely large. Problematic input data are likely to exist, but the relevant ones can be hard to find.”

We’ll begin by explaining how fuzz testing works and by providing a few examples from research. Then, we’ll look in more detail at how it can be used to test computer vision systems in practice.

How can we smartly generate new inputs?

If the core component of fuzz testing is finding problematic inputs, the key question becomes: how do we go about actually finding these new inputs? These particular inputs that cause the machine learning models to misbehave?

The first idea is, of course, to use a fully random search. We could generate inputs by modifying pixel values randomly until something breaks. However, this has several shortcomings. First, it is very inefficient because finding relevant failure cases can be difficult and expensive.

Secondly, what does ‘until something breaks’ mean? Machine learning testing is difficult, ML systems often fail silently. Relevant bugs are subtle and challenging to find and don’t usually lead to a program ‘crashing’. The ML system may instead decide that a dog in an image is now a cat without raising any alarms.

ML systems often fail silently. Relevant bugs are subtle and challenging to find and don’t usually lead to a program ‘crashing’.

Finally, the input may quickly become semantically meaningless in the context of the application, thus going beyond where the system is expected to perform well. It could be interesting to still test for such inputs.

Why? Here is an example, a camera might break or a bad connection might lead to random-looking images. In this case you still want the system to fail gracefully.

Interlude: How do you know if your system is failing?

Before we continue, let’s take a look at how to evaluate whether the machine learning system is actually failing. The concept of metamorphic relations developed in the previous blog of this ml testing series becomes very useful for this purpose. These are variations to the input image that change a known label in a predictable way. For example, often the output of a classification problem should not depend on how the image is rotated: a rotated dog is still a dog.

This notion can be used as a tool for fuzz testing. As long as the operations performed to modify an input lead to well-understood changes in the label, we can establish whether a new input ‘breaks’ the system.

A few examples of fuzz testing techniques

To apply fuzz testing successfully, we need to be more efficient than a fully random search. Most approaches are based on the idea of mutating an initial input based on a specified set of rules and operations.

DLFuzz, for example, focuses on the idea that problematic inputs tend to appear due to low neuron coverage in the trained system. New images that turn on a large subset of neurons that are not activated during training may then lead to unexpected model predictions. DLFuzz modifies input images to activate these rarely visited neurons in order to trigger such failures.

Another approach, DeepHunter, chooses a set of random transformations among a set that preserves image labels. This way, whether a newly generated fuzzy input decreases performance can be evaluated with the original image label. Indeed, if we modify an image by randomly rotating it and expect the labels to remain the same, we can compare the output of the system. The newly rotated images can be checked with the original image labels to decide if there is a failure.

How can fuzz testing be used to test ML systems?

Fuzz testing becomes an essential component to add to our testing suites. It allows us to stress test the system and get a clearer idea of how the system will perform in practice by leveraging a larger, synthetic dataset. Fuzzy stress testing gives us access to images that are likely to arise in practice but are not in the original dataset. Test on much larger datasets using fuzzy stress testing.

Test on much larger datasets using fuzzy stress testing.

Example: Surveying and energy site.

Let’s say that you were building a machine learning system for a robot that was designed to survey a renewable energy site. Data availability often becomes a core challenge when building such systems. It is true we may have enough general images taken on rainy days, and images of wind turbines on sunny days. But images of turbines on rainy days may be scarce.

For complex real-world systems, it’s often impossible to have sufficient coverage for all scenarios that arise in practice. As such, data augmentation techniques are key and a standard go-to technique for anyone building computer vision systems or machine learning models in general.

Fuzz testing can stress test the system within its operational environment to find combinations where the system performs weakly. It can also help to find where further augmentations or data collection should be done.

– Adding random synthetic fog to the image at intensity x.

– Adding random glare of intensity y at position p.

Input images of roundabouts could be fuzzily mutated using these transformations to look for failure cases. This process is very powerful since it can provide a large number of images that may arise in practice but are not present in the existing dataset. More inspiration on how this can be done through a guided approach can be found in DeepHunter.

By running such tests repeatedly throughout the development process, teams can ensure that their systems work as specified. They can also find problematic cases that need further data augmentation and data collection.

Prepare for the unexpected with fuzz.

Fuzz testing can also help to answer the broader question:

– Does my system perform well when presented with ‘unexpected’ images that lie outside of what could be considered its operational environment?

Development teams should ensure that their ml models fail gracefully in such cases.

The complexity of a typical neural network makes it vulnerable to a variety of failures. This includes failures on images that are close in pixel space to images for which the trained models performs well. This has been extensively researched in the field of adversarial examples.

Trivial sanity checks, such as testing how machine learning models perform on partially blacked-out images and other such transforms, are essential. Several open-source libraries, such as Albumentations, provide a wide range of such transforms. Such testing of ml models should, therefore, also be added to the complete testing suite of a critical ML component.

Fuzz testing is an interesting testing method that provides a principled way to stress test your ML system. This is key to understanding whether 1) your system performs well when it should and 2) your system fails gracefully when presented with challenging inputs. The introduced testing methods, allow you to look for the blind spots of your system during development and prevent them from happening during operation.

Get started with Lakera today.

Get in touch with mateo@lakera.ai to find out more about what Lakera can do for your team, or get started right away.

.svg)