For a long time, security had a clear shape. You secured applications. You secured data. You secured infrastructure.

Then AI showed up, and quietly broke that model.

Not because it introduced one new risk, but because it spread risk across everything at once.

Today, AI doesn’t live in a single system. It shows up in how employees work, how applications behave, and how agents act across tools and data. And the moment you look at it that way, something becomes obvious:

There isn’t one place to secure anymore.

The problem: AI risk is fragmented by design

If you try to map where AI risk comes from today, it doesn’t point to a single surface. It comes from human interaction. From probabilistic outputs. From systems that can take action.

Employees use dozens (sometimes hundreds) of AI tools across browsers, desktops, and IDEs. Applications generate outputs in real time that no one explicitly wrote. Agents connect systems, invoke tools, and execute actions, often with delegated access and minimal human oversight.

Each of these looks different. Feels different. Breaks in different ways.

But they all share one thing: they’re hard to see in isolation.

And that’s exactly the issue.

Most organizations are trying to solve AI security as a collection of point problems. A control here. A filter there. A model test somewhere in the pipeline.

But AI doesn’t operate in silos.

So neither can security.

The shift: from point solutions to a control plane

If AI risk spans employees, applications, and agents, then security needs to span them too.

Not as separate tools, but as a single system.

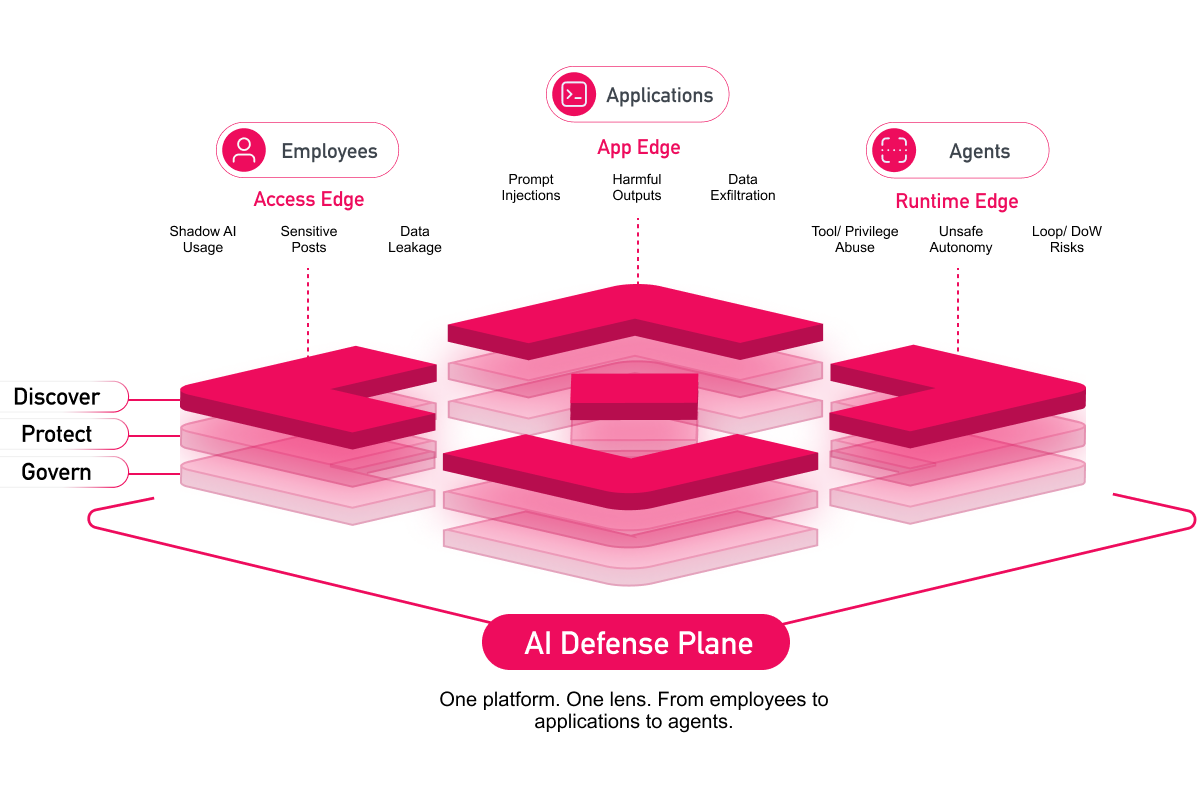

This is the idea behind the AI Defense Plane.

At its simplest, it’s a way to bring everything into one place:

- Visibility into how AI is used across the organization

- Protection enforced at runtime, where AI interactions actually happen

- Governance that applies consistently across users, apps, and systems

Not three different approaches.

One system. One control plane.

That shift matters more than it sounds.

Because once you see AI as a system, not a set of tools, you can start to follow how risk actually moves.

From a prompt… to an application… to an agent action… to a real-world outcome.

Across the full execution lifecycle: from input to action.

The three layers you actually need to think about

If you zoom out, most organizations are already operating across three distinct layers:

1. Employees

This is where AI enters the business first.

People using chatbots, copilots, and assistants to move faster. Often outside of visibility. Often without guardrails.

This is where data gets pasted, decisions get influenced, and workflows start to shift.

2. Applications

This is where AI becomes part of the product.

Prompts get assembled dynamically. Context gets pulled in from internal systems. Outputs are generated in real time.

This is also where things like prompt injection and unintended disclosure start to appear, inside flows that traditional controls were never designed to inspect.

3. Agents

This is where AI stops suggesting, and starts doing.

Agents retrieve data, call tools, and execute actions across systems, often with delegated access and minimal human oversight.

A single unsafe instruction can turn into a chain of real consequences.

And importantly, these layers don’t exist independently. They connect. Data flows between them. Decisions propagate across them. Risk compounds across them. Which is why trying to secure them separately doesn’t really work.
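For the agent layer in particular, one way to picture a runtime check is a small gate that every tool call passes through before it executes. The sketch below is illustrative only: the tool names, the two policy sets, and the `check_tool_call` function are hypothetical and not part of any specific product.

```python
# Hypothetical policy sets; the tool names are illustrative assumptions.
ALLOWED_TOOLS = {"search_docs", "read_ticket"}      # low-risk, read-only
NEEDS_APPROVAL = {"send_email", "delete_record"}    # acts on the outside world


def check_tool_call(tool: str, approved_by_human: bool = False) -> str:
    """Decide what happens to a single agent tool call before it runs."""
    if tool in ALLOWED_TOOLS:
        return "allow"
    if tool in NEEDS_APPROVAL:
        # Actions with real consequences wait for a person unless already approved.
        return "allow" if approved_by_human else "hold_for_approval"
    # Default-deny: anything not explicitly listed is blocked.
    return "deny"


# An agent plan where one unsafe step is mixed in with routine ones.
plan = ["read_ticket", "send_email", "drop_database"]
decisions = [check_tool_call(tool) for tool in plan]
print(decisions)  # ['allow', 'hold_for_approval', 'deny']
```

The default-deny branch is the design choice that matters here: an instruction the policy has never seen stops at the gate instead of becoming the first link in a chain.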

What changes when you treat AI as one system

The AI Defense Plane is really about acknowledging that reality.

Instead of asking, “How do we secure this tool?”

It shifts the question to:

“How do we understand and control how AI behaves across the entire organization?”

In practice, that means a few things.

It means being able to see AI usage end-to-end, not just at the entry point. It means enforcing policy at runtime, where decisions are made, not just where data is stored. And it means correlating signals across employees, applications, and agents so that risk doesn’t get lost between them.

It also means continuously testing how those systems behave under real and adversarial conditions. Because that’s where most problems actually happen.

Not in one place, but in the gaps between them.
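To make the correlation idea concrete, here is a minimal sketch of following one interaction across employees, applications, and agents. It assumes every event carries a shared `trace_id`, which is an assumption for illustration; the `AIEvent` fields and the flagging rule are likewise hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AIEvent:
    # Hypothetical event shape; field names are illustrative assumptions.
    trace_id: str            # assumed ID that follows one interaction across layers
    layer: str               # "employee", "application", or "agent"
    action: str
    sensitive: bool = False  # whether sensitive data was involved at this step


def correlate(events):
    """Group events by trace_id so one interaction can be followed end to end."""
    traces = defaultdict(list)
    for event in events:
        traces[event.trace_id].append(event)
    return dict(traces)


def flag_cross_layer_risk(traces):
    """Flag traces where sensitive data appears and an agent later takes an action."""
    flagged = []
    for trace_id, trace_events in traces.items():
        touched_sensitive = any(e.sensitive for e in trace_events)
        agent_acted = any(e.layer == "agent" for e in trace_events)
        if touched_sensitive and agent_acted:
            flagged.append(trace_id)
    return sorted(flagged)


events = [
    AIEvent("t1", "employee", "paste_into_chatbot", sensitive=True),
    AIEvent("t1", "application", "assemble_prompt"),
    AIEvent("t1", "agent", "send_email"),
    AIEvent("t2", "employee", "ask_question"),
    AIEvent("t2", "application", "generate_answer"),
]
flagged = flag_cross_layer_risk(correlate(events))
print(flagged)  # ['t1']
```

Viewed one layer at a time, none of the events in `t1` looks alarming. The risk only shows up once the three are read as a single trace.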

Why this matters now

AI has already crossed the line from something that generates output to something that takes action. And once that happens, the cost of getting it wrong changes.

Getting it wrong now means data moving in ways you didn’t expect. Systems behaving in ways you didn’t plan. Actions being taken in ways you didn’t authorize.

At that point, isolated controls stop being enough.

What’s needed is coordination.

Where to start

You don’t need to solve everything at once.

But you do need to start thinking about AI as a system, not a feature.

Start by understanding where AI is already in use. Where it connects to your data. Where it can act on your behalf.

From there, the path becomes clearer.
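As a rough starting point, those three questions (where AI is in use, where it connects to data, where it can act) can be captured in a simple inventory. The sketch below is one possible shape, not a prescribed method: the `AIAsset` fields, the example entries, and the scoring weights are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class AIAsset:
    # Hypothetical inventory record; fields mirror the three questions above.
    name: str
    layer: str                # "employee", "application", or "agent"
    data_sources: tuple = ()  # internal data the asset can reach
    can_act: bool = False     # whether it can take actions on your behalf


def priority(asset: AIAsset) -> int:
    """Illustrative score: 1 point for data access, 2 for the ability to act."""
    return (1 if asset.data_sources else 0) + (2 if asset.can_act else 0)


inventory = [
    AIAsset("browser chatbot", "employee"),
    AIAsset("support copilot", "application", data_sources=("ticket_db",)),
    AIAsset("ops agent", "agent", data_sources=("crm",), can_act=True),
]

# Review the assets that touch data and can act first.
ranked = sorted(inventory, key=priority, reverse=True)
print([asset.name for asset in ranked])
# ['ops agent', 'support copilot', 'browser chatbot']
```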

**Explore how the AI Defense Plane works in practice.**
